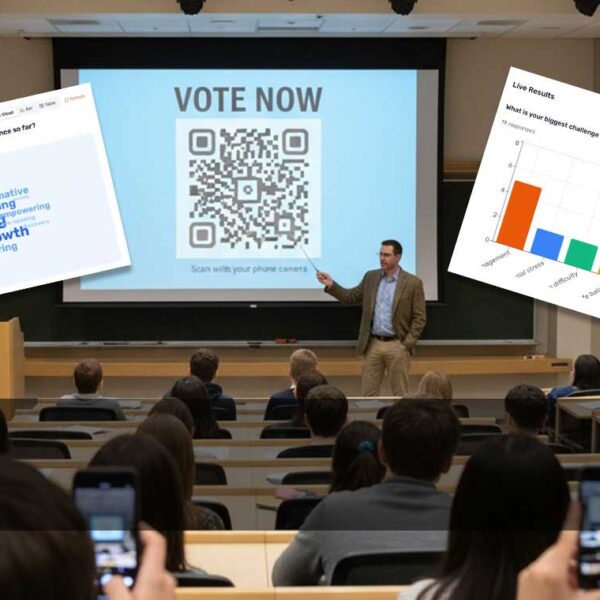

How to Run a Live Q&A Session, with Moderation and Upvoting

Steve Murch

Apr 22, 2026

Run an effective Q&A session at your next conference with this great new feature from PollQR.

How to Merge Calendars (iCal) Automatically

Apr 8, 2026

Shared Vacation Home Agreement Template

Apr 1, 2026