Engines of Wow: Part II: Deep Learning and The Diffusion Revolution, 2014-present

In Part I of this series on AI-generated art, we introduced how deep learning systems can be used to “learn” from a well-labeled dataset. In other words, algorithmic tools can “learn” patterns from data to reliably predict or label things. Now on their way to being “solved” via better and better tweaks and rework, these predictive engines are magical power-tools with intriguing applications in pretty much every field.

Here, we’re focused on media generation, specifically images, but it bears noting that many of the same basic techniques described below can apply to songwriting, video, text (e.g., customer service chatbots, poetry and story creation), financial trading strategies, personal counseling and advice, text summarization, computer coding and more.

Generative AI in Art: GANs, VAEs and Diffusion Models

From Part I of this series, we know at a high level how we can use deep-learning neural networks to predict things or add meaning to data (e.g., translate text, or recognize what’s in a photo). But we can also use deep learning techniques to generate new things. This type of neural network system, often composed of multiple neural networks, is called a Generative Model. Rather than just interpreting things passively or searching through existing data, AI engines can now generate highly relevant and engaging new media.

How? The three most common types of Generative Models in AI are Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs) and Diffusion Models. Sometimes these techniques are combined. They aren’t the only approaches, but they are currently the most popular. Today’s star products in art-generating AI are Midjourney by Midjourney.com (Diffusion-based), DALL-E by OpenAI (VAE-based), and Stable Diffusion by Stability AI (Diffusion-based). It’s important to understand that each of these algorithmic techniques was conceived within roughly the past decade.

My goal is to describe these three methods at a cocktail-party chat level. The intuitions behind them are incredibly clever ways of thinking about the problem. There are lots of resources on the Internet which go much further into each methodology; some are listed at the end of the sections below.

Generative Adversarial Networks

The first strand of generative-AI models, Generative Adversarial Networks (GANs), has been very fruitful for single-domain image generation. For instance, visit thispersondoesnotexist.com. Refresh the page a few times.

Each time, you’ll see highly convincing images like this, but never the same one twice:

As the domain name suggests, these people do not exist. This is the computer creating a convincing image, using a Generative Adversarial Network (GAN) trained to construct a human-like photograph.

Note that often, it only renders half of one’s eyeglasses. This GAN doesn’t really understand the concept of “glasses,” simply a series of pixels that need to be adjacent to one another.

Generative Adversarial Networks were introduced in a 2014 paper by Ian Goodfellow et al. That was just eight years ago!

The basic idea is that you have two deep-learning neural networks: a Generator and a Discriminator. You can think of them as a Counterfeiter and a Detective, respectively. The Discriminator (Detective) learns to distinguish genuine articles from counterfeits, and it penalizes the Generator for producing implausible results. Meanwhile, the Generator (Counterfeiter) learns to produce plausible data; whenever a fake “fools” the Discriminator, it becomes a negative training example for the Discriminator. The two play a zero-sum game against each other (thus “adversarial”) thousands and thousands of times. With each adjustment to the two networks’ weights, the Generator gets better at constructing something that fools the Discriminator, and the Discriminator gets better at detecting fakes.
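To make the Counterfeiter-and-Detective game concrete, here is a minimal sketch in plain Python. It is a toy under loud assumptions, not how any production GAN is built: the "images" are single numbers drawn from a bell curve centered at 4, the Generator is just a line (a*z + b), the Discriminator is a one-input logistic classifier, and every name and constant is made up for illustration.

```python
import math
import random


def sigmoid(s):
    return 1.0 / (1.0 + math.exp(-s))


def discriminator_step(w, c, real, fake, lr):
    """One gradient step for the Detective: score real samples high, fakes low."""
    dw = dc = 0.0
    for x in real:
        d = sigmoid(w * x + c)
        dw += (d - 1.0) * x   # gradient of -log D(x) for a real sample
        dc += (d - 1.0)
    for x in fake:
        d = sigmoid(w * x + c)
        dw += d * x           # gradient of -log(1 - D(x)) for a fake sample
        dc += d
    n = len(real) + len(fake)
    return w - lr * dw / n, c - lr * dc / n


def generator_step(a, b, w, c, noise, lr):
    """One gradient step for the Counterfeiter: make fakes the Detective rates as real."""
    da = db = 0.0
    for z in noise:
        d = sigmoid(w * (a * z + b) + c)
        da += (d - 1.0) * w * z   # gradient of -log D(G(z)) with respect to a
        db += (d - 1.0) * w       # ... and with respect to b
    n = len(noise)
    return a - lr * da / n, b - lr * db / n


def train(steps=2000, batch=32, lr=0.05, seed=0):
    rng = random.Random(seed)
    a, b = 1.0, 0.0   # Generator: G(z) = a*z + b
    w, c = 0.0, 0.0   # Discriminator: D(x) = sigmoid(w*x + c)
    for _ in range(steps):
        real = [rng.gauss(4.0, 1.0) for _ in range(batch)]   # "genuine articles"
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        fake = [a * z + b for z in noise]                     # "counterfeits"
        w, c = discriminator_step(w, c, real, fake, lr)
        a, b = generator_step(a, b, w, c, noise, lr)
    return a, b, w, c


if __name__ == "__main__":
    print(train())
```

In this toy, the Generator's offset b tends to drift from 0 toward the real data's center of 4 as the two take turns, although small GANs like this one are known to oscillate rather than settle neatly.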

The whole system looks like this:

GANs have delivered pretty spectacular results, but in fairly narrow domains. For instance, GANs have been pretty good at mimicking artistic styles (called “Neural Style Transfer”) and colorizing black-and-white images.

GANs are cool and a major area of generative AI research.

More reading on GANs:

- Generative Adversarial Networks, an Overview: Creswell et al.

- Overview of GAN Structure, Google

- Video Overview: Generative Adversarial Networks, Computerphile

Variational Autoencoders (VAE)

An encoder can be thought of as a compressor of data, and a decoder as a decompressor, something which does the opposite. You’ve probably compressed an image down to a smaller size without losing recognizability. It turns out you can use AI models to compress an image. Data scientists call this reducing its dimensionality.

What if you built two neural network models, an Encoder and a Decoder? It might look like this, going from x, the original image, to x’, the “compressed and then decompressed” image:

So conceptually, you could train an Encoder neural network to “compress” images into vectors, and then a Decoder neural network to “decompress” the image back into something close to the original.

Then, you could consider the red “latent space” in the middle as basically the Rosetta Stone for what a given image means. Run that algorithm numerous times over multiple images, encoding them along with the text labels of those images, and you would end up with a condensed encoding of how to render various images. If you did this across many, many images and subjects, these numerous red vectors would overlap in n-dimensional space, and could be sampled and mixed and then run through the decoder to generate images.

With some mathematical tricks (specifically, forcing the latent variables in red to conform to a normal distribution), you can build a system which can generate images that never existed before, but which have some very similar properties to the dataset which was used to train the encoder.
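The "mathematical tricks" can be sketched in a few lines. In the standard VAE recipe, the encoder outputs a mean and a (log) variance for each latent number, a sample is drawn around that mean, and a penalty term measures how far the encoder's output strays from a standard normal distribution. The function names below are illustrative assumptions, and the neural networks themselves are left out.

```python
import math
import random


def sample_latent(mu, log_var, rng):
    """Draw z = mu + sigma * eps, with eps ~ N(0, 1) (the reparameterization trick)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """Penalty: KL divergence from N(mu, sigma^2) to N(0, 1), summed over dimensions."""
    return sum(0.5 * (m * m + math.exp(lv) - 1.0 - lv)
               for m, lv in zip(mu, log_var))


if __name__ == "__main__":
    rng = random.Random(0)
    mu, log_var = [0.5, -1.0], [0.0, -0.5]   # pretend encoder output for one image
    print(sample_latent(mu, log_var, rng))
    print(kl_to_standard_normal(mu, log_var))
```

During training this penalty is added to the reconstruction error, so the latent vectors stay packed around the origin. That is what makes it reasonable to later feed the decoder a fresh draw from a normal distribution and expect a plausible image back.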

More reading on VAEs:

- Understanding Variational Autoencoders, Towards Data Science

- Variational AutoEncoders – GeeksforGeeks

2015: “Diffusion” Arrives

Is there another method entirely? What else could you do with a deep learning system which can “learn” how to predict things?

In March 2015, a revolutionary paper came out from researchers Sohl-Dickstein, Weiss, Maheswaranathan and Ganguli. It was inspired by the physics of non-equilibrium systems: for instance, dropping a drop of food coloring into a glass of water.

Imagine you saw a film of that process of “destruction” of a pure glass of water, and could stop it frame by frame. Could you build a neural network to reliably predict what a video-shown-in-reverse might look like?

Let’s think about a massive training set of animal images. Imagine you take an image in your training dataset and create multiple copies of it, each time systematically adding graphic “noise.” Step by step, more noise is added to your image (x), via what mathematicians call a Markov chain (a sequence of incremental steps, each depending only on the one before it). The distortion you apply at each step is normally distributed: Gaussian noise.
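Here is a minimal sketch of that noising chain in plain Python. It treats an "image" as a short list of pixel values and, at every step, shrinks the signal slightly while mixing in random Gaussian noise. The function names and the linear noise schedule (0.0001 rising to 0.02 over 1,000 steps) are assumptions chosen for illustration.

```python
import math
import random


def add_noise_step(x, beta, rng):
    """One Markov step: keep most of the previous image, mix in a little Gaussian noise."""
    keep = math.sqrt(1.0 - beta)
    spread = math.sqrt(beta)
    return [keep * v + spread * rng.gauss(0.0, 1.0) for v in x]


def forward_diffusion(x0, betas, rng):
    """Return the whole trajectory [x(0), x(1), ..., x(T)]."""
    trajectory = [list(x0)]
    for beta in betas:
        trajectory.append(add_noise_step(trajectory[-1], beta, rng))
    return trajectory


def signal_fraction(betas):
    """How much of the original image's scale survives after all the steps."""
    return math.sqrt(math.prod(1.0 - b for b in betas))


if __name__ == "__main__":
    T = 1000
    betas = [1e-4 + (0.02 - 1e-4) * t / (T - 1) for t in range(T)]
    steps = forward_diffusion([0.9, 0.1, 0.5, 0.7], betas, random.Random(0))
    print(steps[0], steps[T])
    print(signal_fraction(betas))
```

With a schedule like this, almost none of the original image survives by the last step, which is the point: x(T) is, for practical purposes, pure noise.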

In a forward direction, from left to right, it might look something like this. At each step from left to right, you’re going from data (the image) to pure noise:

But here’s the magical insight behind Diffusion models. Once you’ve done this, what if you trained a deep learning model to try to predict frames in the reverse direction? Could you predict a “de-noised” image x(t) from its noisier version, x(t+1)? Could you read each step backward, from right to left, and try to predict the best way to remove noise at each step?

This was the insight in the 2015 paper, albeit with much more mathematics behind it. It turns out you can train a deep learning system to learn how to “undo” noise in an image, with pretty good results. For instance, if you input the pure-noise image from the last step, x(T), train a deep learning network to output the previous step, x(T-1), and do this over and over again at every step and with many images, you can “train” the network to subtract noise from an image, all the way back to an original image.
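The training idea (show the model a noisier value, ask it for the cleaner one) can be illustrated without a neural network at all. The sketch below is a deliberately tiny stand-in: each "image" is a single number, and the "model" is one learned multiplier fitted by least squares. All names and constants are assumptions, and a real diffusion model replaces the multiplier with a deep network conditioned on the step number.

```python
import math
import random


def noisy_pair(rng, beta):
    """Return (cleaner, noisier): a value x(t-1) and its noised version x(t)."""
    cleaner = rng.gauss(0.0, 1.0)   # stand-in for one pixel of a training image
    noisier = math.sqrt(1.0 - beta) * cleaner + math.sqrt(beta) * rng.gauss(0.0, 1.0)
    return cleaner, noisier


def fit_denoiser(pairs):
    """Least-squares fit of cleaner ~ k * noisier; k stands in for learned weights."""
    num = sum(c * n for c, n in pairs)
    den = sum(n * n for c, n in pairs)
    return num / den


def mean_squared_error(pairs, k):
    return sum((c - k * n) ** 2 for c, n in pairs) / len(pairs)


rng = random.Random(0)
pairs = [noisy_pair(rng, beta=0.1) for _ in range(5000)]
k = fit_denoiser(pairs)

if __name__ == "__main__":
    print("learned multiplier:", k)
    print("error if we do nothing:", mean_squared_error(pairs, 1.0))
    print("error after 'training':", mean_squared_error(pairs, k))
```

Even this one-parameter "model" learns that the best guess for the cleaner value is a slightly shrunken copy of the noisy one. A deep network does the same kind of thing, only with millions of parameters and far richer structure to exploit.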

Do this enough times, with enough terrier images, say. Then ask your trained model to divine a “terrier” from random noise. Gradually, step by step, it removes noise from an image to synthesize a “terrier”, like this:

Images generated from the current Midjourney model:

Wow! Just slap “No One Hates a Terrier” on any of these images above, print 100 t-shirts, and sell them on Amazon. Profit! I’ll touch on some of the legal and ethical controversies and ramifications in the final post in this series.

Training the Text Prompts: Embeddings

How did Midjourney know to produce a “terrier”, and not some other object or scene or animal?

This relied upon another major parallel track in deep learning: natural language processing. In particular, word “embeddings” can be used to get from keywords to meanings. And during the image model training, Midjourney applied these embeddings to attach meaning to each noisy image.

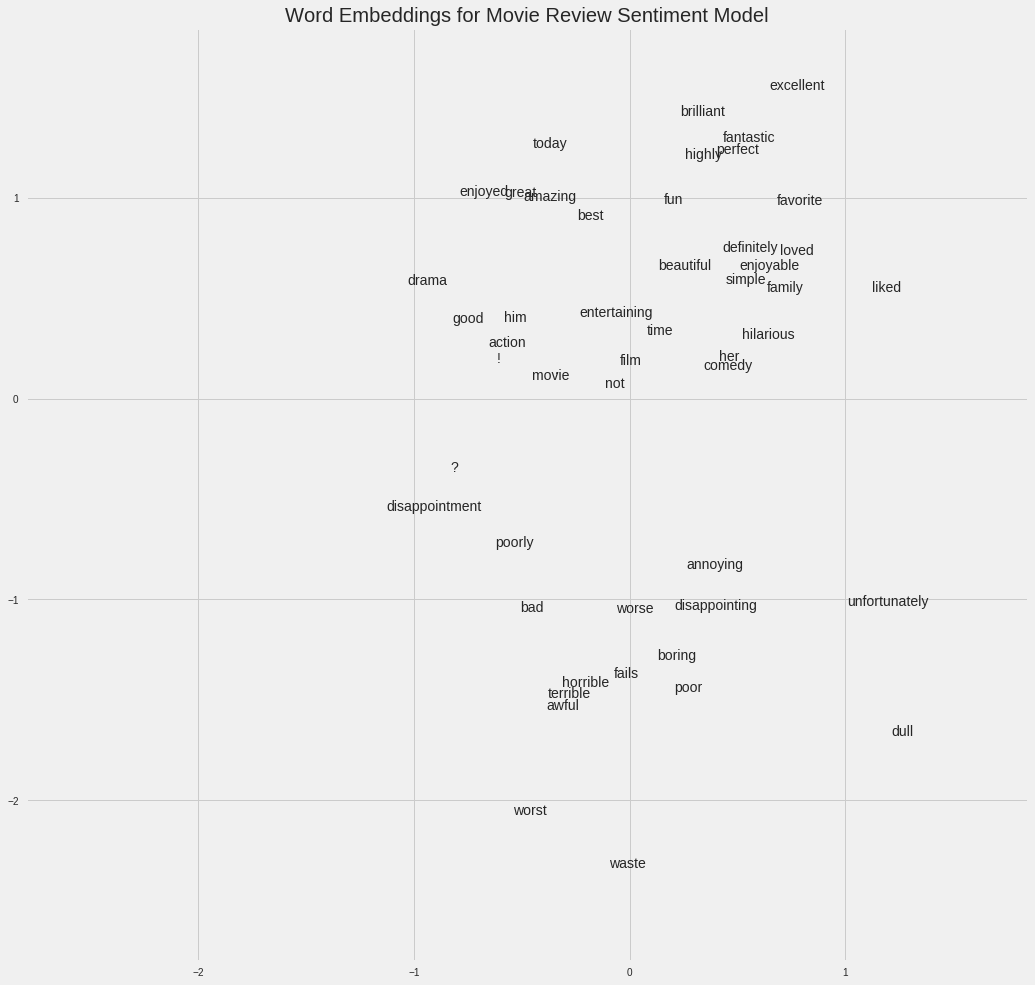

An “embedding” is a mapping of a chunk of text into a vector of continuous numbers. Think about a word as a list of numbers. A textual variable could be a word or a node in a graph, or a relation between nodes in a graph. By ingesting massive amounts of text, you can train a deep learning network to understand relationships between words and entities, and numerically pull out how closely associated some words and phrases are with others. They can be used to cluster together the sentiment of an expression in mathematical terms a computer can appear to understand. For instance, embedding models are now able to interpret semantics and relationships between words, like “royalty + woman – man = queen.”
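The word arithmetic above is easy to try with toy numbers. The vectors below are hand-made and hypothetical, with three axes loosely meaning "royal", "male" and "female"; real embedding models learn hundreds of axes from text rather than having them assigned by hand.

```python
import math

# Hypothetical hand-made embeddings: (royal, male, female).
EMBEDDINGS = {
    "royalty": [1.0, 0.0, 0.0],
    "man":     [0.0, 1.0, 0.0],
    "woman":   [0.0, 0.0, 1.0],
    "king":    [1.0, 1.0, 0.0],
    "queen":   [1.0, 0.0, 1.0],
}


def cosine(u, v):
    """Cosine similarity: 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nearest(query, exclude=()):
    """Return the vocabulary word whose vector points most nearly the same way."""
    candidates = [word for word in EMBEDDINGS if word not in exclude]
    return max(candidates, key=lambda word: cosine(query, EMBEDDINGS[word]))


# royalty + woman - man, computed axis by axis
query = [r + w - m for r, w, m in zip(EMBEDDINGS["royalty"],
                                      EMBEDDINGS["woman"],
                                      EMBEDDINGS["man"])]
answer = nearest(query, exclude=("royalty", "woman", "man"))

if __name__ == "__main__":
    print(answer)
```

Among the remaining words, "queen" points most nearly in the same direction as the combined vector, which is all that "understanding" amounts to here: closeness in a space of numbers.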

An example on Google Colab took a vocabulary of 50,000 words in a collection of movie reviews, and learned over 100 different attributes from words used with them, based on their adjacency to one another:

Source: Movie Sentiment Word Embeddings

So, if you simultaneously injected into the “de-noising” diffusion-based learning process the information that this is about a “dog, looking up, on white background, terrier, smiling, cute,” you can get a deep learning network to “learn” how to go from random noise (x(T)) to a very faint outline of a terrier (x(T-1)), to even less faint (x(T-2)) and so on, all the way back to x(0). If you do this over thousands of images, and thousands of keyword embeddings, you end up with a neural network that can construct an image from some keywords.

Incidentally, researchers have found that roughly T=1000 steps is all you need in this process, but millions of input images and enormous amounts of computing power are needed to learn how to “undo” noise at high resolution.
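Putting the pieces together, generation is a loop: start from pure noise at x(T) and call the trained denoiser once per step, passing along the prompt, until you reach x(0). The sketch below shows only the shape of that loop. The denoiser is a hypothetical stand-in that nudges three numbers toward a made-up per-prompt target; a real system would use a trained neural network and would convert the prompt to an embedding first.

```python
import random

# Hypothetical stand-in for what training would have learned: for each prompt,
# the "clean image" (here just three numbers) the denoiser steers toward.
LEARNED_TARGETS = {
    "terrier": [0.9, 0.2, 0.4],
    "cat":     [0.1, 0.8, 0.5],
}


def denoise_step(x, t, prompt):
    """Stand-in for the trained network: return a slightly less noisy x(t-1)."""
    target = LEARNED_TARGETS[prompt]
    return [v + (goal - v) / t for v, goal in zip(x, target)]


def generate(prompt, T=1000, seed=0):
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(3)]   # x(T): pure noise
    for t in range(T, 0, -1):                     # walk back from step T to step 1
        x = denoise_step(x, t, prompt)
    return x                                      # x(0): the finished "image"


if __name__ == "__main__":
    print(generate("terrier"))
    print(generate("cat"))
```

The same starting noise ends up as a different result depending on the prompt, because the prompt is fed into every single denoising step.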

Let’s step back a moment to note that this revelation about Diffusion Models was only really put forward in 2015, and improved upon in 2018 and 2020. So we are just at the very beginning of understanding what might be possible here.

In 2021, Dhariwal and Nichol showed convincingly that diffusion models can achieve image quality superior to the then state-of-the-art GAN models.

Up next, Part III: Ramifications and Questions

That’s it for now. In the final Part III of Engines of Wow, we’ll explore some of the ramifications and controversies, and make some predictions about where this goes next.