Engines of Wow: AI Art Comes of Age

While most of us were focused on Ukraine, the midterm elections, or simply returning to normal as best we can, Artificial Intelligence (AI) took a gigantic leap forward in 2022. Seemingly all of a sudden, computers are now eerily capable of human-level creativity. Natural language agents like GPT-3 are able to carry on an intelligent conversation. GitHub Copilot is able to write major blocks of software code. And new AI-assisted art engines with names like Midjourney, DALL-E and Stable Diffusion delight our eyes, but threaten to disrupt entire creative professions. They raise important questions about artistic ownership, derivative work and compensation.

In this three-part blog series, I’m going to dive into the brave new world of AI-generated art. How did we get here? How do these engines work? What are some of the ramifications?

This series is divided into three parts:

- Part I: The Artists in the Machine, 1950-2015+

- Part II: The Diffusion Revolution, 2015+

- Part III: Ramifications and Questions

[featured image above: “God of Storm Clouds” created by Midjourney AI algorithm]

But first, why should we care? What kind of output are we talking about?

Let’s try one of the big players, the Midjourney algorithm. Midjourney lets you play around in their sandbox for free for about 25 queries. You can register for free at Midjourney.com; they’ll invite you to a Discord chat server. After reading a “Getting Started” agreement and accepting some terms, you can type in a prompt. You might go with: “/imagine portrait of a cute leopard, beautiful happy, Gryffindor outfit, super detailed, hyper realism.”

Wait about 60 seconds, choose one of the four samples generated for you, click the “upscale” button for a bigger image, and voila:

The Leopard of Gryffindor was created without any human retouching. This is final Midjourney output. The algorithm took the text prompt, and then did all the work.

I look at this image, and I think: Stunning.

Looking at it, I get the kind of “this changes everything” feeling, like the first time I browsed the World Wide Web, spoke to Siri or Alexa, rode in an electric vehicle, or did a live video chat with friends across the country for pennies. It’s the kind of revolutionary step-function that causes you to think “this will cause a huge wave of changes and opportunities,” though it’s not even clear what they all are.

Are artists, graphic designers and illustrators doomed? Will these engines ultimately help artists or hurt them? How will the creative ecosystem change when it becomes nearly free to go from idea to visual image?

Once mainly focused on just processing existing images, computers are now extremely capable of generating brand new things. Before diving into a high-level overview of these new generative AI art algorithms, let me emphasize a few things: First, no artist has ever created exactly the above image before, nor will it likely be generated again. That is, Midjourney and its competitors (notably DALL-E and Stable Diffusion) aren’t search engines: they are media creation engines.

In fact, if you type this same exact prompt into Midjourney again, you’ll get an entirely different image, yet one which is also likely to deliver on the prompt fairly well.

There is an old joke within Computer Science circles that “Artificial Intelligence is what we call things that aren’t working yet.” That’s now sounding quaint. AI is all around us, making better and better recommendations, completing sentences, curating our media feeds, “optimizing” the prices of what we buy, helping us with driving assistance on the road, defending our computer networks and detecting spam.

Part I: The Artists in the Machine, 1950-2015+

How did this revolutionary achievement come about? Two ways, just as bankruptcy came about for Mike Campbell in Hemingway’s The Sun Also Rises: First gradually. Then suddenly.

Computer scientists have spent more than fifty years trying to perfect art generation algorithms. These five decades can be roughly divided into two distinct eras, each with entirely different approaches: “Procedural” and “Deep Learning.” And, as we’ll see in Part II, the Deep-Learning era had three parallel but critical deep learning efforts which all converged to make it the clear winner: Natural Language, Image Classifiers, and Diffusion Models.

But first, let’s rewind the videotape. How did we get here?

Procedural Era: 1970’s-1990’s

If you asked most computer users, the naive approach to generating computer art would be to try to encode various “rules of painting” into software programs, via the very “if this then that” kind of logic that computers excel at. And that’s precisely how it began.

In 1973, British-born artist Harold Cohen, resident at Stanford University’s Artificial Intelligence Laboratory (SAIL), created AARON, the first computer program dedicated to generating art. Cohen was both an accomplished, talented artist and a computer scientist. He thought it would be intriguing to try to “teach” a computer how to draw and paint.

His thinking was to encode various “rules about drawing” into software components and then have them work together to compose a complete piece of art. Cohen relied upon his skill as an exceptional artist, and coded his own “style” into his software.

AARON was an artificial intelligence program first written in the C programming language (a low-level language compiled for speed), and later in LISP (a language designed for symbolic manipulation). AARON knew about various rules of drawing, such as how to “draw a wavy blue line, intersecting with a black line.” Later, constructs were added to combine these primitives together to “draw an adult human face, smiling.” By 1995, Cohen added rules for painting color within the drawn lines.

Though there were aspects of AARON which were artificially intelligent, by and large computer scientists call this a procedural approach. Do this, then that. Pick up a brush, pick an ink color, and draw from point A to B. Construct an image from its components. Join the lines. And you know what? After a few decades of work, Cohen created some really nice pieces, worthy of hanging on a wall. You can see some of them at the Computer History Museum in Mountain View, California.

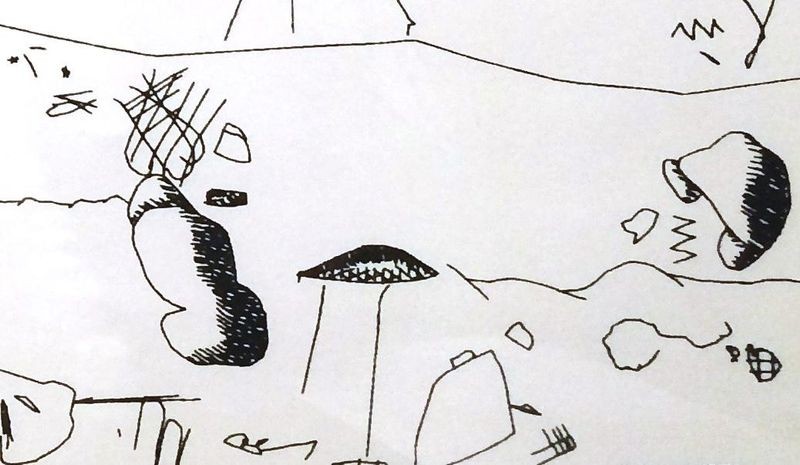

In 1980, AARON was able to generate this:

By 1995, Cohen had encoded rules of color, and AARON was generating images like this:

Just a few years ago, other attempts at AI-generated art were flat-looking and derivative, like this image from 2019:

Twenty-seven years after AARON’s first AI-generated color painting, algorithms like Midjourney would be quickly rendering photorealistic images from text prompts. But the primary method used to accomplish it is completely different.

Deep Learning Era (1986-Present)

Algorithms which can create photorealistic images-on-demand are the culmination of multiple parallel academic research threads in learning systems dating back several decades.

We’ll get to the generative models which are key to this new wave of “engines of wow” in the next post, but first, it’s helpful to understand a bit about their central component: neural networks.

Since about 2000, you have probably noticed everyday computer services making massive leaps in predictive capabilities; that’s because of neural networks. Turn on Netflix or YouTube, and these services will serve up ever-better recommendations for you. Or, literally speak to Siri, and she will largely understand what you’re saying. Tap on your iPhone’s keyboard, and it’ll automatically suggest which letters or words might follow.

Each of these systems relies upon trained prediction models built by neural networks. And to envision them, a branch of computer scientists and mathematicians had to radically shift their thinking from the procedural approach. They did so first in the 1950’s and 60’s, and then again in a machine-learning renaissance which began in earnest in the mid-1980’s.

The key insight: these researchers speculated that instead of procedural coding, perhaps something akin to “intelligence” could be fashioned from general purpose software models, which would algorithmically “learn” patterns from a massive body of well-labeled training data. This is the field of “machine learning,” specifically supervised machine learning, because it’s using accurately pre-labeled data to train a system. That is, rather than “Computer, do this step first, then this step, then that step”, it became “Computer: learn patterns from this well-labeled training dataset; don’t expect me to tell you step-by-step which sequence of operations to do.”

The first big step began in 1958. Frank Rosenblatt, a researcher at Cornell University, created a simplistic precursor to neural networks, the “Perceptron,” basically a one-layer network consisting of visual sensor inputs and software outputs. The Perceptron system was fed a series of punchcards. After 50 trials, the computer “taught” itself to distinguish those cards which were marked on the left from cards marked on the right. The computer which ran this program was a five-ton IBM 704, the size of a room. By today’s standards, it was an extremely simple task, but it worked.

A single-layer perceptron is the basic component of a neural network. A perceptron consists of input values, a weight for each input plus a bias, a weighted sum, and an activation function:
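As a minimal sketch of those pieces, here is a tiny perceptron in Python. It is not Rosenblatt’s original program: the four-sensor “cards,” the learning rate and the function names are all assumptions made up for illustration. It only shows how a weighted sum, a bias and a step activation can “learn” to tell left-marked cards from right-marked ones.

```python
def step(z):
    """Activation function: fire (1) if the weighted sum is non-negative."""
    return 1 if z >= 0 else 0


def predict(weights, bias, inputs):
    """Weighted sum of the inputs plus a bias, passed through the activation."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs)) + bias
    return step(weighted_sum)


def train(samples, epochs=50, learning_rate=0.1):
    """Perceptron learning rule: nudge the weights after each wrong guess."""
    n_inputs = len(samples[0][0])
    weights, bias = [0.0] * n_inputs, 0.0
    for _ in range(epochs):
        for inputs, label in samples:
            error = label - predict(weights, bias, inputs)  # -1, 0 or +1
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Hypothetical toy "punchcards": four sensors, two on the left, two on the right.
# Label 1 = marked on the left, label 0 = marked on the right.
CARDS = [
    ([1, 0, 0, 0], 1),
    ([0, 1, 0, 0], 1),
    ([1, 1, 0, 0], 1),
    ([0, 0, 1, 0], 0),
    ([0, 0, 0, 1], 0),
    ([0, 0, 1, 1], 0),
]

weights, bias = train(CARDS)
predictions = [predict(weights, bias, inputs) for inputs, _ in CARDS]
print(predictions)
```

Because this toy data is linearly separable, the learning rule settles on weights that favor the left-hand sensors and penalize the right-hand ones.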

Rosenblatt described it as the “first machine capable of having an original idea.” But the Perceptron was extremely simplistic; it merely added up the optical signals it detected to “perceive” dark marks on one side of the punchcard versus the other.

In 1969, MIT’s Marvin Minsky, whose father was an eye surgeon, wrote convincingly that neural networks needed multiple layers (like the optical neuron fabric in our eyes) to really do complex things. But his book Perceptrons, co-authored with Seymour Papert and well-respected in hindsight, got little traction at the time. That’s partially because, during these intervening decades, the computing power required to “learn” more complex things via multi-layer networks was out of reach. But time marched on, and over the next three decades, computing power, storage, languages and networks all improved dramatically.

From the 1950’s through the early 1980’s, many researchers doubted that computing power would be sufficient for intelligent learning systems via a neural network style approach. Skeptics also wondered if models could ever get to a level of specificity to be worthwhile. Early experiments often “overfit” the training data and simply output the input data. Some would get stuck on local maxima or minima from a training set. There were reasons to doubt this would work.

And then, in 1986, Carnegie Mellon Professor Geoffrey Hinton, whom many consider the “Godfather of Deep Learning” (go Tartans!), demonstrated that “neural networks” could learn to predict shapes and words by statistically “learning” from a large, labeled dataset. Hinton’s revolutionary 1986 breakthrough, with co-authors David Rumelhart and Ronald Williams, was the concept of “backpropagation.” It makes it practical to train a model with multiple layers (hidden layers): on each iteration, the network’s output is compared with the expected output, and that error is passed backward through the layers to adjust the weights, minimizing the “loss,” or distance from the expected output.

This is rather like the golfer who adjusts each successive golf swing, having observed how far off their last shots were. Eventually, with enough adjustments, they calculate the optimal way to hit the ball to minimize its resting distance from the hole. (This is where terms like “loss function” and “gradient descent” come in.)
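To make the golfer analogy concrete, here is a minimal gradient descent sketch in Python. Everything in it is a simplifying assumption for illustration: the ball is assumed to land exactly as far as the “swing” value says, the hole sits at a made-up 150 yards, and the learning rate of 0.1 is arbitrary.

```python
def loss(swing, target):
    """Loss function: squared distance between the ball and the hole."""
    return (swing - target) ** 2


def gradient(swing, target):
    """Slope of the loss with respect to the swing strength."""
    return 2 * (swing - target)


def gradient_descent(target, swing=0.0, learning_rate=0.1, steps=100):
    """Repeatedly adjust the swing a little in the direction that lowers the loss."""
    history = []
    for _ in range(steps):
        history.append(loss(swing, target))
        swing -= learning_rate * gradient(swing, target)
    return swing, history


HOLE = 150.0  # hypothetical distance to the hole, in yards
final_swing, losses = gradient_descent(HOLE)
print(round(final_swing, 3), losses[0], losses[-1])
```

Each shot shrinks the remaining miss by a constant factor, so the loss falls quickly at first and then levels off as the ball closes in on the hole.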

In 1986-87, around the time of the 1986 Hinton-Rumelhart-Williams paper on backpropagation, the whole AI field was in flux between these procedural and learning approaches, and I was earning a Master’s in Computer Science at Stanford, concentrating in “Symbolic and Heuristic Computation.” I had classes which dove into the AARON-style type of symbolic, procedural AI, and a few classes touching on neural networks and learning systems. (My master’s thesis was in getting a neural network to “learn” how to win the Tower of Hanoi game, which requires apparent backtracking to win.)

In essence, you can think of a neural network as a fabric of software-represented units (neurons) waiting to soak up patterns in data. The methodology to train them is: “here is some input data and the output I expect, learn it. Here’s some more input and its expected output, adjust your weights and assumptions. Got it? Keep updating your priors. OK, let’s keep doing that.” Like a dog learning what “sit” means (do this, get a treat / don’t do this, don’t get a treat), neural networks are able to “learn” over iterations, by adjusting the software model’s weights and thresholds.

Do this enough times, and what you end up with is a trained model that’s able to “recognize” patterns in the input data, outputting predictions, or labels, or anything you’d like classified.

A neural network, and in particular the special type of multi-layered network called a deep learning system, is “trained” on a very large, well-labeled dataset (i.e., with inputs and correct labels). The training process uses Hinton’s “backpropagation” idea to adjust the weights and thresholds of the various neurons in the statistical model, getting closer and closer to “learning” the underlying pattern in the data.
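Here is a rough sketch of that training loop in plain Python: a network with one hidden layer, trained by backpropagation. The XOR dataset, the four hidden neurons, the learning rate and all function names are assumptions chosen for illustration; real deep learning systems use far larger datasets and specialized libraries.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def make_network(n_inputs, n_hidden, rng):
    """Random starting weights; training will adjust them."""
    return {
        "w_hidden": [[rng.uniform(-1, 1) for _ in range(n_inputs)]
                     for _ in range(n_hidden)],
        "b_hidden": [0.0] * n_hidden,
        "w_out": [rng.uniform(-1, 1) for _ in range(n_hidden)],
        "b_out": 0.0,
    }


def forward(net, x):
    """Run one input through the hidden layer and the output neuron."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(ws, x)) + b)
              for ws, b in zip(net["w_hidden"], net["b_hidden"])]
    output = sigmoid(sum(w * h for w, h in zip(net["w_out"], hidden))
                     + net["b_out"])
    return hidden, output


def mean_loss(net, data):
    """Mean squared distance between predictions and expected labels."""
    return sum((forward(net, x)[1] - y) ** 2 for x, y in data) / len(data)


def train_step(net, x, y, lr):
    """One backpropagation update for a single labeled example."""
    hidden, output = forward(net, x)
    # Error signal at the output, for the loss 0.5 * (output - y) ** 2.
    delta_out = (output - y) * output * (1.0 - output)
    # Pass the error backward to each hidden neuron (using the old weights).
    delta_hidden = [delta_out * w * h * (1.0 - h)
                    for w, h in zip(net["w_out"], hidden)]
    # Adjust every weight a little in the direction that reduces the loss.
    net["w_out"] = [w - lr * delta_out * h
                    for w, h in zip(net["w_out"], hidden)]
    net["b_out"] -= lr * delta_out
    for j, d in enumerate(delta_hidden):
        net["w_hidden"][j] = [w - lr * d * xi
                              for w, xi in zip(net["w_hidden"][j], x)]
        net["b_hidden"][j] -= lr * d


# Toy labeled dataset (an assumption for this sketch): XOR of two inputs.
DATA = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 0)]

rng = random.Random(0)
net = make_network(n_inputs=2, n_hidden=4, rng=rng)
loss_before = mean_loss(net, DATA)
for _ in range(5000):
    for x, y in DATA:
        train_step(net, x, y, lr=0.5)
loss_after = mean_loss(net, DATA)
print(loss_before, loss_after)
```

The same “show an example, measure the error, adjust the weights” loop is what scales up, with vastly more data and layers, into the deep learning systems discussed here.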

For much more detail on Deep Learning and the mathematics involved, see this excellent overview:

Deep Learning Revolutionizes AI Art

We’ll rely heavily upon this background of neural networks and deep learning for Part II: The Diffusion Revolution. The AI revolution uses deep learning networks to interpret natural language (text to meaning), classify images, and “learn” how to synthetically build an image from random noise.

Gallery

Before leaving, here are a few more images created from text prompts on Midjourney:

God of storm clouds over the horizon, Midjourney

All these images were created via the Midjourney engine

You get the idea. We’ll check in on how deep learning enabled new kinds of generative approaches to AI art, called Generative Adversarial Networks, Variational Autoencoders and Diffusion, in Part II: Engines of Wow: Deep Learning and The Diffusion Revolution, 2014-present.