Here’s how I went from idea to launched product in just two weeks using “vibe-coding” with Claude Code, taking an initial concept all the way to a fully featured SaaS platform. This development story showcases the power of AI-assisted programming: a simple idea for combining customer feedback polls with QR code technology grew into a complete business solution. PollQR.com offers a free QR Code Generator alongside premium polling features, enabling businesses to create instant feedback loops with their customers.

Tag: ai

Engines of Wow, Part III: Opportunities and Pitfalls

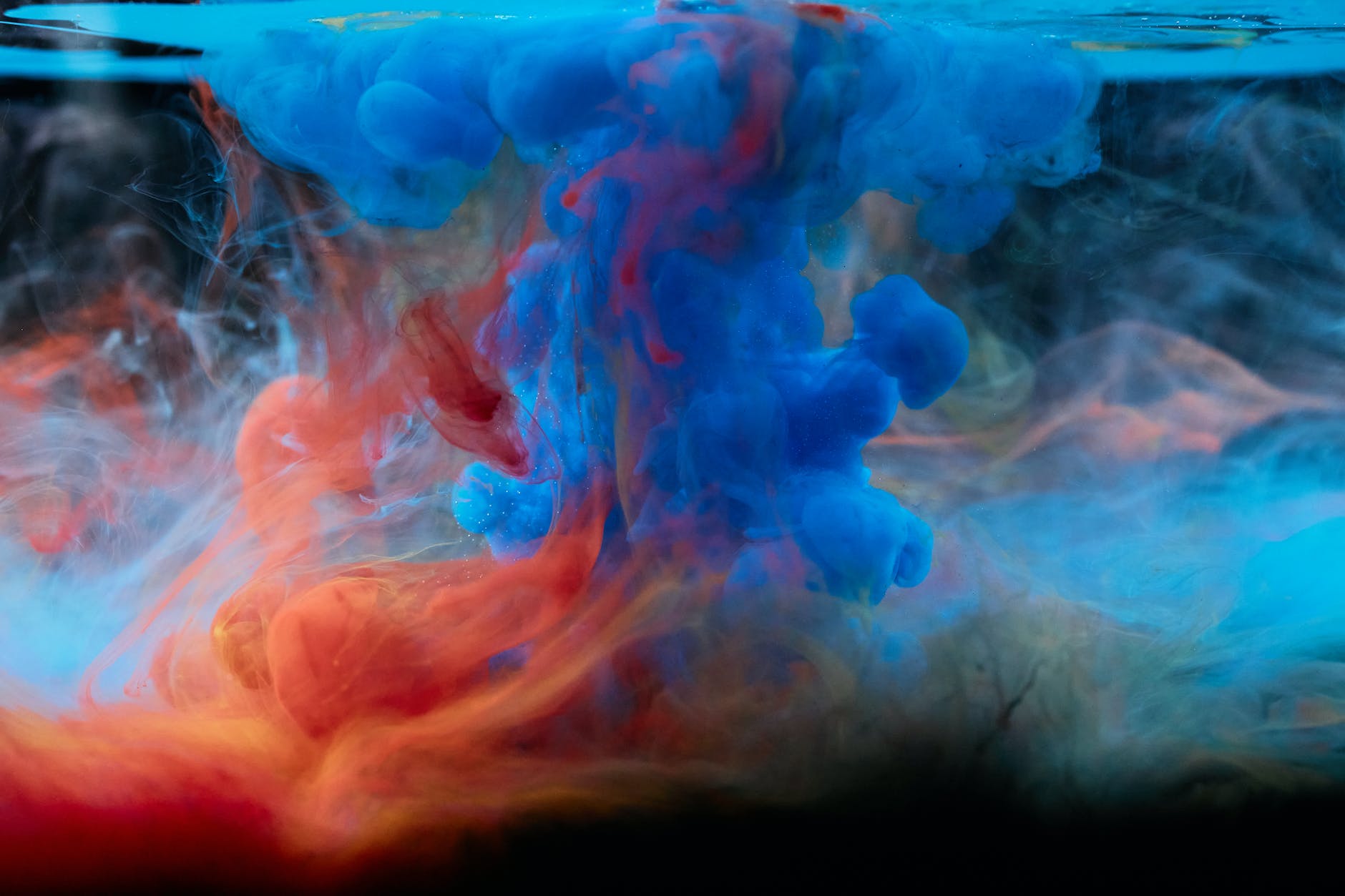

This is the third in a three-part series introducing revolutionary changes in AI-generated art. In Part I: AI Art Comes of Age, we traced some of the winding path that brought us to this point. Part II: Deep Learning and The Diffusion Revolution, 2014-present, introduced three basic methods […]

Engines of Wow: Part II: Deep Learning and The Diffusion Revolution, 2014-present

A revolutionary insight in 2015, plus AI work on natural language, unleashed a new wave of generative AI models.

Engines of Wow: AI Art Comes of Age

Advancements in AI-generated art test our understanding of human creativity and laws around derivative art.
